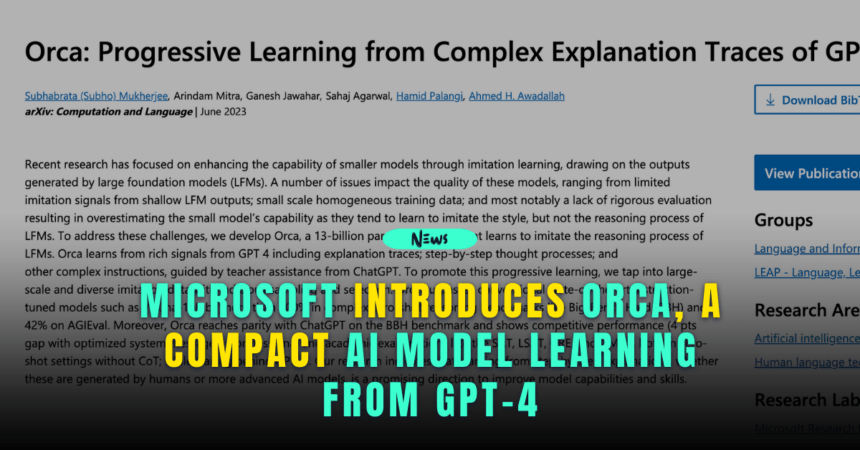

Microsoft, in collaboration with OpenAI, has launched Orca, a novel AI model that imitates and learns from larger language models, including the widely recognized GPT-4.

Orca Is a Powerful, Efficient AI Model

Microsoft Research has announced its new AI model, Orca, designed to mimic the learning and reasoning processes of expansive foundational models like GPT-4.

What sets Orca apart, however, is its size: it is a compact model with 13 billion parameters, far smaller than the foundation models it learns from.

.@Microsoft just released a new #LLM model called Orca, a 13B Language model that has been trained on (Large Foundation Models) like #GPT4! #Orca crushes state-of-the-art instruction-tuned models such as #Vicuna-13B, showing an improvement of over 100% on BigBench Hard! pic.twitter.com/xbJnO7OcDu

— BadTech Bandit ∞ #AI, #drones, web3 & beyond (@BadTechBandit) June 20, 2023

The benefit of a smaller model is its efficiency – Orca requires fewer computing resources to function, allowing researchers to tailor their models to specific needs without relying on large data centers.

Orca’s Learning Process and Performance

Orca is not just a smaller AI model; it’s an efficient learner too.

Orca learns from rich signals from GPT-4, including explanation traces and complex instructions. It also learns from step-by-step thought processes, guided by teacher assistance from ChatGPT.
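One way to picture an explanation trace is as a training record that pairs an instruction with the teacher model's step-by-step answer. The sketch below is illustrative only: the `ExplanationExample` class, its field names, the prompt layout, and the sample texts are all assumptions made for this example, not Microsoft's actual data format or code.

```python
from dataclasses import dataclass


@dataclass
class ExplanationExample:
    """One hypothetical imitation-learning record (field names are assumptions)."""
    system_message: str    # instruction asking the teacher to explain its reasoning
    user_query: str        # the task posed to the teacher model
    teacher_response: str  # the teacher's step-by-step explanation and answer


def to_training_text(example: ExplanationExample) -> str:
    """Flatten a record into one prompt/response string for fine-tuning."""
    return (
        f"### System:\n{example.system_message}\n\n"
        f"### User:\n{example.user_query}\n\n"
        f"### Response:\n{example.teacher_response}"
    )


# Sample content invented for illustration; not taken from any real dataset.
example = ExplanationExample(
    system_message="Think step by step and justify your answer.",
    user_query="A train travels 120 km in 2 hours. What is its average speed?",
    teacher_response="Average speed is distance divided by time: 120 km / 2 h = 60 km/h.",
)
training_text = to_training_text(example)
print(training_text)
```

The point of keeping the teacher's reasoning in the record, rather than only the final answer, is that the smaller model is trained to reproduce the explanation as well as the result.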


Microsoft employs large-scale, diverse imitation data to promote progressive learning with Orca.

Performance-wise, it already outperforms Vicuna-13B, a state-of-the-art instruction-tuned model of the same size, by more than 100% on challenging zero-shot reasoning benchmarks like Big-Bench Hard (BBH).
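A figure such as "by 100%" is normally a relative gain over the baseline model's benchmark score, so a 100% improvement means the score roughly doubled. The short sketch below shows that arithmetic; the function name and the two scores are hypothetical values chosen for illustration, not reported results.

```python
def relative_improvement(baseline: float, new: float) -> float:
    """Return the percentage change of `new` relative to `baseline`."""
    if baseline == 0:
        raise ValueError("baseline score must be non-zero")
    return (new - baseline) / baseline * 100.0


# Hypothetical scores used only to illustrate the arithmetic; not real benchmark data.
baseline_score = 20.0
new_score = 40.0
improvement = relative_improvement(baseline_score, new_score)
print(f"{improvement:.0f}% relative improvement")  # prints "100% relative improvement"
```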

The new model is also reported to score 42% higher than conventional instruction-tuned models such as Vicuna-13B on AGIEval.

Despite its smaller size, Orca holds its own in reasoning ability, performing comparably to ChatGPT on benchmarks such as BBH.

It is also competitive on academic examinations such as the SAT, LSAT, GRE, and GMAT, although it still trails GPT-4.

Looking Forward

Microsoft’s research team envisions that Orca will continue to evolve, learning from human-generated explanations and advanced language models.

They expect the model’s skills and capabilities to improve over time, providing a more efficient alternative to larger models while maintaining competitive performance levels.